Anthropic quietly removed MagicDocs from Claude Code

MagicDocs — the internal Anthropic feature that auto-maintained architectural documentation inside Claude Code — was removed three days ago in v2.1.91. Anthropic’s changelog doesn’t mention it. The removal was confirmed by the Piebald-AI automated extraction bot, which tracks every prompt change across Claude Code releases. The same release also removed the /pr-comments slash command.

What was MagicDocs?

MagicDocs was one of the more interesting discoveries in the March 31 source maps leak. Several leak analyses highlighted it as an example of how Anthropic uses Claude Code internally in ways not available to the public.

The feature worked like this: after any model response involving tool calls, a postSamplingHook would fire a subagent to update markdown files bearing # MAGIC DOC: headers. The subagent had access to the Edit tool only — it could modify the doc it was given but nothing else — and its instructions emphasized a specific philosophy: terse, high-signal, current-state-only documentation. No changelogs, no exhaustive API lists, no code walkthroughs. Architecture, entry points, gotchas, and design rationale.
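The flow described above can be sketched roughly as follows. This is a minimal illustration, not Anthropic's code: only the # MAGIC DOC: header, the tool-call trigger, and the Edit-only restriction come from the leak analyses. Every type and function name here (TrackedFile, SubagentTask, magicDocTitle, planMagicDocUpdates) is hypothetical, and the sketch assumes the header sits on the first line of the file.

```typescript
// Hypothetical sketch: only the "# MAGIC DOC:" header, the tool-call trigger,
// and the Edit-only restriction come from the article. All names are invented.

interface TrackedFile {
  path: string;
  content: string;
}

interface SubagentTask {
  docPath: string;
  title: string;
  allowedTools: string[];
}

// Assumption: the header sits on the first line of the file.
const MAGIC_DOC_HEADER = /^# MAGIC DOC:\s*(.+)$/;

// Returns the doc title when the first line is a magic-doc header, else null.
function magicDocTitle(content: string): string | null {
  const firstLine = content.split("\n")[0] ?? "";
  const match = MAGIC_DOC_HEADER.exec(firstLine.trim());
  const title = match?.[1];
  return title !== undefined ? title.trim() : null;
}

// Stand-in for the post-sampling hook: plans one Edit-only subagent task per
// magic doc, and plans nothing when the response made no tool calls.
function planMagicDocUpdates(
  toolCallCount: number,
  files: TrackedFile[],
): SubagentTask[] {
  if (toolCallCount === 0) return [];
  const tasks: SubagentTask[] = [];
  for (const file of files) {
    const title = magicDocTitle(file.content);
    if (title !== null) {
      tasks.push({ docPath: file.path, title, allowedTools: ["Edit"] });
    }
  }
  return tasks;
}
```

The sketch only plans the work; dispatching the subagent and applying its edits are left out because the article does not describe how those steps were implemented.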

It was never available externally. The initMagicDocs() function was gated behind a hardcoded getUserType() === "ant" check, and the bundler compiled getUserType() to return "external" in every public build. The code was present in the npm package but completely inert, and setting an environment variable wouldn’t have helped: the gate was baked into the binary at compile time.
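To make the gating concrete, here is a rough sketch of what a compile-time gate of this kind looks like after bundling. The names getUserType() and initMagicDocs() and the values "ant" and "external" come from the leak analyses; the function bodies and the boolean return value are assumptions made for illustration.

```typescript
// Hypothetical sketch of a public build. The bodies below are illustrative.

type UserType = "ant" | "external";

// In a public build, the bundler is described as having fixed this result at
// compile time, so the function reads no environment variable or config.
function getUserType(): UserType {
  return "external";
}

// Returns true if the feature was initialized (return value is an assumption
// added so the outcome is observable).
function initMagicDocs(): boolean {
  if (getUserType() !== "ant") {
    return false; // the feature's code ships in the bundle but never runs
  }
  // An internal build would register the post-sampling hook here.
  return true;
}
```

Because the constant is part of the shipped code, nothing a user sets at runtime can reach the branch that registers the hook.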

MagicDocs existed in the public eye for roughly 48 hours. The source maps leaked on March 31. The code was removed on April 2. I spent part of that window engineering my own version from the extracted prompts and the Claw Code reimplementation.

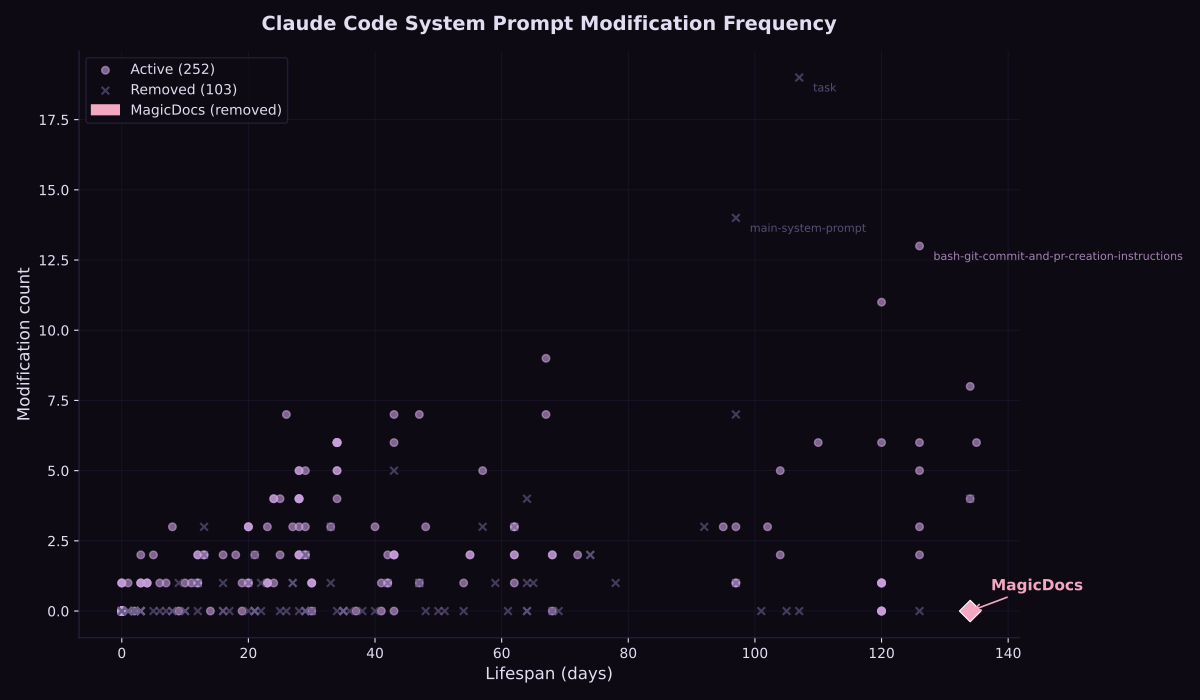

Zero edits over 134 days of Claude Code prompt tracking

Piebald AI has been tracking Claude Code’s system prompts since November 19, 2025 — automatically extracting and diffing them with every release. Using their git history, we can see how often each prompt was modified over its lifetime.

Five prompts have existed since the repo began tracking. Of those five, MagicDocs was modified the fewest times:

| Prompt | Content modifications | Status |
|---|---|---|
| ReadFile tool description | 8 | active |
| Write tool description | 6 | active |
| Edit tool description | 4 | active |
| /pr-comments slash command | 4 | removed in v2.1.91 |
| MagicDocs agent prompt | 0 | removed in v2.1.91 |

These counts exclude repo maintenance commits (initial setup, metadata batch updates) and count only version-tagged Claude Code releases that modified the prompt content. MagicDocs was never modified by Anthropic — not once in the 134 days Piebald AI was tracking Claude Code releases.
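The counting rule and the 134-day figure can be reproduced with a short sketch. The Commit shape and both function names are hypothetical, not Piebald AI's tooling, and the sketch assumes maintenance commits can be recognized by having no release tag. The end date of April 2, 2026 is inferred from the November 19, 2025 start date and the removal date given earlier.

```typescript
// Hypothetical sketch of the counting rule; not Piebald AI's actual tooling.

interface Commit {
  releaseTag: string | null; // e.g. "v2.1.91"; null for repo maintenance commits
  contentChanged: boolean;   // true if the prompt text itself differs
}

// Counts only version-tagged releases that changed the prompt content.
function countContentModifications(history: Commit[]): number {
  return history.filter((c) => c.releaseTag !== null && c.contentChanged).length;
}

// Whole days between two ISO dates (YYYY-MM-DD, both parsed as UTC midnight).
function daysBetween(startIso: string, endIso: string): number {
  const msPerDay = 24 * 60 * 60 * 1000;
  return Math.round((Date.parse(endIso) - Date.parse(startIso)) / msPerDay);
}
```

Under these assumptions, daysBetween("2025-11-19", "2026-04-02") evaluates to 134, which matches the tracking window quoted above.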

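The two prompts removed in v2.1.91 are among the least-iterated original prompts: MagicDocs has the fewest modifications, and /pr-comments is tied with the Edit tool description for the second fewest. That’s probably not a coincidence.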

Why it probably failed

I want to be clear that this next section is somewhat speculative. We don’t have internal communications from Anthropic explaining the removal, and the changelog is silent. But the evidence points in a clear direction.

Both removed prompts read like they were written before Claude Code had a skills framework. The /pr-comments prompt opens with “You are an AI assistant integrated into a git-based version control system. Your task is to fetch and display comments from a GitHub pull request.” It then micromanages five numbered steps of gh api calls that Claude already knows how to make. In modern skills produced through test-driven design, unnecessary instructions are excised, but that isn’t the case with older prompts. (This is the topic of a forthcoming article on context engineering I hope to publish this week.)

MagicDocs had a similar problem at a deeper level. It was a workflow baked into an agent prompt dispatched by a postSamplingHook. There was no way to test whether the prompt actually produced good documentation. No red/green/refactor cycle. No feedback loop at all. In my own MVP testing of a MagicDocs implementation, I found that subagents would modify or even delete out-of-scope files — a problem the original prompt presumably also had, given its lack of iteration.
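One way to contain the out-of-scope edits I saw in my own testing is to check every tool call against the single document the subagent was assigned. The sketch below is my own illustration, not something taken from the original prompt or code. ToolCall, normalizePath, and isInScope are hypothetical names, and paths are assumed to be relative to the project root with forward slashes.

```typescript
// Hypothetical scope guard; not part of MagicDocs as leaked.

interface ToolCall {
  tool: string;
  targetPath: string;
}

// Collapses "." and ".." segments. A ".." that would climb above the root is
// kept, so an escaping path can never compare equal to an in-project path.
function normalizePath(p: string): string {
  const out: string[] = [];
  for (const part of p.split("/")) {
    if (part === "" || part === ".") continue;
    if (part === "..") {
      if (out.length > 0 && out[out.length - 1] !== "..") {
        out.pop();
      } else {
        out.push("..");
      }
      continue;
    }
    out.push(part);
  }
  return out.join("/");
}

// Allows only Edit calls that target the assigned doc.
function isInScope(call: ToolCall, assignedDoc: string): boolean {
  return (
    call.tool === "Edit" &&
    normalizePath(call.targetPath) === normalizePath(assignedDoc)
  );
}
```

A guard like this is also easy to test, which is the property the original prompt-only design lacked.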

Meanwhile, Claude Code’s skills framework has matured into a proper solution for both use cases. Skills arrive at the point of action, fresh in context and testable with subagent pressure scenarios. The memory system — including dream consolidation — handles persistent cross-session knowledge with built-in pruning and maintenance. The iterative, self-correcting nature of MagicDocs is strikingly similar to what memory and dreaming now provide, suggesting that the functionality MagicDocs aimed for has been absorbed by these newer mechanisms.

I considered whether Anthropic might have simply changed how internal features are bundled — moving MagicDocs behind a compile-time feature flag rather than removing it. But other internal-only prompts remain visible in the extracted prompts repo, and strings analysis of the v2.1.91 binary confirms that both the MagicDocs prompt and its code infrastructure are completely absent. This wasn’t a gating change. The feature was excised.

So why did MagicDocs’ removal coincide with the Claude Code leak? One reasonable explanation is that without an internal advocate, the feature was forgotten until people reported on it in the context of the leak.

Auto-maintained documentation is a solid idea that many people are exploring. But an untestable agent prompt that nobody iterated on for 134 days is a telling sign in a codebase that was rapidly developing better mechanisms for exactly this kind of work.